Complex forecast topic: See how this startup is growing into a public company

Mission: We are on a mission to push AI forward, to serve the open community and our enterprise customers. We are committed to driving the AI revolution by developing open-weight models that are on par with proprietary solutions. Stay tuned as we continue to advance in the field of AI.

☑️ #9 May 9, 2024

French AI startup taking on Silicon Valley is set to stuff its war chest

wsj.com: [Excerpt] Mistral AI is close to raising roughly $600 million, nearly tripling its valuation to $6 billion, from investors including General Catalyst and Lightspeed.

The company is also relatively lean, with roughly 60 employees, compared with the teams of hundreds that built AI models at Meta and OpenAI. Although Mistral is still small compared with rivals, investors have been impressed by the company’s efficiency and ability to build competitive technology.

As of December, Mistral had raised just over $500 million from investors, while also committing to sell small stakes to companies involved in AI, including chip maker Nvidia and business-software companies Microsoft and Salesforce. Microsoft also has a deal to distribute Mistral’s AI tools to users of its Azure cloud-computing platform. Some Mistral models are also available on the cloud platforms operated by Google and Amazon.com.

While Mistral is a French company, some of the biggest investors in the new fundraising round are American.

🙂

☑️ #7 Feb 26, 2024

Microsoft and Mistral AI announce new partnership to accelerate AI innovation and introduce Mistral Large first on Azure

azure.microsoft.com: [Transcription] [Excerpt] Today, we are announcing a multi-year partnership between Microsoft and Mistral AI, a recognized leader in generative artificial intelligence. Both companies are fueled by a steadfast dedication to innovation and practical applications, bridging the gap between pioneering research and real-world solutions.

🔹Related content:

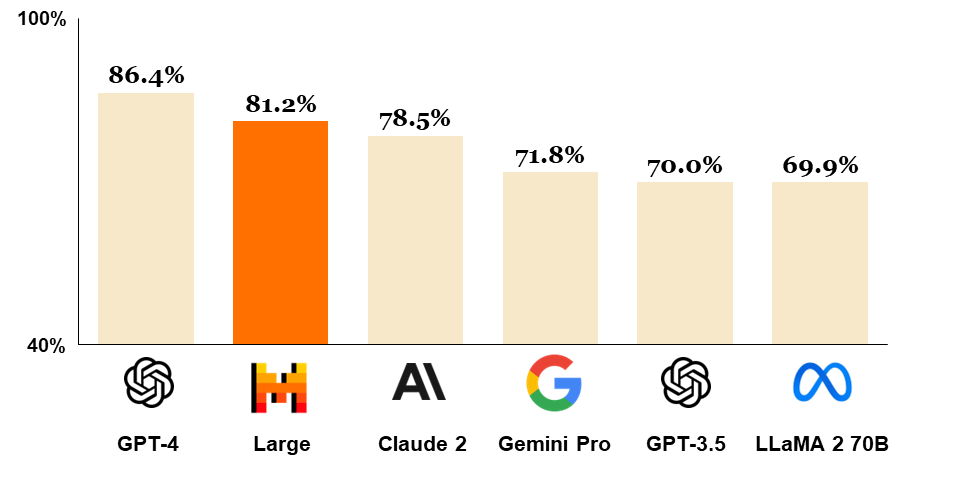

Mistral Large: Mistral Large is our flagship model, with top-tier reasoning capacities. It is also available on Azure.

🙂

☑️ #6 Dec 21, 2023

Welcome Mixtral - a SOTA Mixture of Experts on Hugging Face

huggingface.co: [Transcription] [Excerpt] Mixtral 8x7b is an exciting large language model released by Mistral today, which sets a new state-of-the-art for open-access models and outperforms GPT-3.5 across many benchmarks. We’re excited to support the launch with a comprehensive integration of Mixtral in the Hugging Face ecosystem 🔥!

🙂

☑️ #5 Dec 9, 2023 🔴 rumor

French AI start-up Mistral secures €2bn valuation

ft.com: [Transcription] [Excerpts] Eight-month-old group set to close roughly €400mn funding round as early as Friday, in new deal led by Andreessen Horowitz.

French artificial intelligence start-up Mistral has been valued at €2bn in a blockbuster funding round set to close as early as Friday, becoming the latest beneficiary of the investor frenzy to buy into the world’s hottest AI companies.

The Paris-based company has secured the lofty valuation through new investment led by prominent Silicon Valley venture firm Andreessen Horowitz, according to multiple people with knowledge of the talks. Others involved in the funding round include tech giants Nvidia and Salesforce, French bank BNP Paribas and US venture capital firm General Catalyst.

Two people said the size of the new round was worth roughly €400mn, comprised mostly of equity with a smaller convertible debt component. The deal is expected to be signed shortly, with an announcement due next week.

The €2bn valuation, which includes the money raised, represents a substantial increase from June, when the weeks-old group raised €105mn at a €240mn valuation in a deal led by Lightspeed Venture Partners.

💲 Money follows…

Mistral AI has 11 investors. One investor has led one Mistral AI funding round. The Seed round was led by Lightspeed. Other notable Mistral AI investors include Headline, Sofina, EXOR and LocalGlobe.

Lightspeed (Lead) | Headline | Sofina | EXOR

⇢ Mixtral of experts. A high quality Sparse Mixture-of-Experts (12/11/23)

⇢ Mistral 7B. The best 7B model to date, Apache 2.0 (9/27/23)

⇢ Business model plans: Cost efficient training and inference platform.

☑️ #4 Nov 17, 2023

ai-pulse.eu: Europe's Premier AI Conference.

@Scaleway: It's time for an era of "change right here, right now." Our CEO, Damien Lucas, explains how we'll help the European #AI ecosystem to grow into a global force & why #Scaleway is the ideal cloud partner to power this new wave of innovation.

⇢ Mistral AI > 6:50:20

🔹Related content:

16:50 - 17:20 Mistral AI's Open Source Initiative: Ambitions, approaches, and roadmap ahead

Understand the practical impact of their architecture choices for their groundbreaking Mistral 7B model.

🙂

☑️ #3 Sep 27, 2023

Bringing open AI models to the frontier

mistral.ai: [Transcription] [Excerpt] Why we’re building Mistral AI.

In the coming months, Mistral AI will progressively and methodically release new models that close the performance gap between black-box and open solutions – making open solutions the best options on a growing range of enterprise use-cases. Simultaneously, we will seek to empower community efforts to improve the next generations of models.

As part of this effort, we’re releasing today Mistral 7B, our first 7B-parameter model, which outperforms all currently available open models up to 13B parameters on all standard English and code benchmarks. This is the result of three months of intense work, in which we assembled the Mistral AI team, rebuilt a top-performance MLops stack, and designed a most sophisticated data processing pipeline, from scratch.

🙂

☑️ #2 Jun 21, 2023

See the pitch memo that raised €105m for four-week-old startup Mistral

sifted.eu: [Transcription] [Excerpts] A small group of researchers know how to build LLMs at scale.

The deck opens with a description of how products like OpenAI’s ChatGPT (powered by large language models) have been developed by “a few small teams” globally. It says that the small number of people with experience of building large models like GPT-4 are “the limiting factor to creating new economic actors in the field”.

It adds that the team has the expertise to train highly efficient AI systems that will create the “strongest model for a given computational budget”. The founding team of Timothée Lacroix, Guillaume Lample and Arthur Mensch spent nearly two decades between them working in AI and machine learning at Meta and Google’s DeepMind.

One Mistral investor tells Sifted that a key reason for backing the team was the limited number of people with the kind of experience held by the founders.

🔹Related content:

drive.google.com: [Transcription] [Excerpts] Mistral.ai strategic memo. Generative AI is a transformative technology.

Infrastructure and data sources

Training a competitive model requires at least an exa-scale cluster for a few months. We intend to rent such capacity for a full year, to allow the development of both open-source and commercial models, with various capacities.

We have already negotiated competitive deals for renting computational power in Tier 1 cloud service providers (we are planning to reserve 1536 H100 starting in September, with a summer ramp up). As mistral.ai has a strong European grounding, we will also be working with both emerging European cloud providers as they grow their deep learning offers.

Having trained models at large scale before has given us know-how that will allow us to gain a factor of 10-100 in training efficiency compared to public methods: our founders and early employees know exactly what to do to train the strongest model for a given computational budget.

Our early investors are also content providers in Europe, and will open all necessary doors for acquiring high quality datasets on which our model can be trained and fine-tuned.

Access to major clients for usage exploration

The founding team is already organising business exploration with major French and European industrial actors. A small product-oriented team (6 people at the end of first year) will start developing leads while the technical team trains the valuable technological bricks. The model team will remain 100% focused on the hard technological brick to avoid distraction.

Business development will start in parallel to the first model family development, using the following strategy:

● Focus on exploring needs with large industrial actors, accompanied by third-party integrators that will be given full access to our best (non-open source) model.

● Co-design of products with a few small emerging partners that focus on generative-AI products.

Exploration with businesses will be used to drive the design of the second generation of models.

Roadmap - First year

We will train two generations of models, while developing business integration in parallel. The first generation will be partially open-source and rely on technology well mastered by the team. It will validate our competence near clients, investors and institutions. The second model generation will address the significant shortcomings of current models to become safely and affordably usable by businesses.

Train the best open-source standard models

At the end of 2023, we will train a family of text-generating models that can beat ChatGPT 3.5 and Bard March 2023 by a large margin, as well as all open source solutions.

Part of this family will be open-sourced; we will engage the community to build on top of it and make it the open standard.

We will service those models with the same endpoints as our competitor for a fee to acquire third-party usage data, and create a few free consumer interfaces for trademark construction and first-party usage data.

Customize for business needs and differentiate

In the following six months, these models will be accompanied by semantic embedding models for content search, and multimodal plugins for handling visual inputs. Specialized models, retrained on negotiated high quality data-sources will be prepared.

Business development will start in parallel to the first model family development: we intend to have proof-of-concept integration by the end of Q1 2024.

On the technical side, during Q1-Q2 2024, we will focus on two major aspects that have been under-estimated by incumbent companies:

● Train a model small enough to run on a 16GB laptop, while being a helpful AI assistant

● Train models with hot-pluggable extra-context, ranging in the millions of extra words, effectively merging language models and retriever systems.

In parallel, training and fine-tuning datasets will be constantly enriched through partnerships and data acquisition.

At the end of Q2 2024, we intend to:

● be distributing the best open-source text-generative model, with text and visual output.

● own generalist and specialist models with one of the highest value/cost ratios

● have scalable and usable and varied APIs for serving our models to third-party integrators.

● have privileged commercial relations with one or two major industrial actors committed to using our technology.

🙂

☑️ #1 Jun 13, 2023

Meta and Deepmind alumni raise €105m seed round to build OpenAI rival Mistral

sifted.eu: [Transcription] [Excerpts] The company will be devoting a large chunk of the money towards compute power.

Paris-based AI lab Mistral has come out of stealth mode today, announcing a €105m seed round led by US VC Lightspeed, as the company lays out its plans to build a European competitor to OpenAI.

The founding team of Timothée Lacroix, Guillaume Lample and Arthur Mensch bring with them nearly two decades of combined experience working in AI and machine learning at Meta and Deepmind.

Other backers of Mistral include French billionaire Xavier Niel, former Google CEO Eric Schmidt and Rodolphe Saadé, CEO of French shipping company CMA CGM. VC investors including Motier Ventures, La Famiglia, Headline, Exor Ventures, Sofina and firstminute capital are also joining the round, as are JCDecaux's family office and France’s public investment bank Bpifrance.